Industrial AI Accelerator

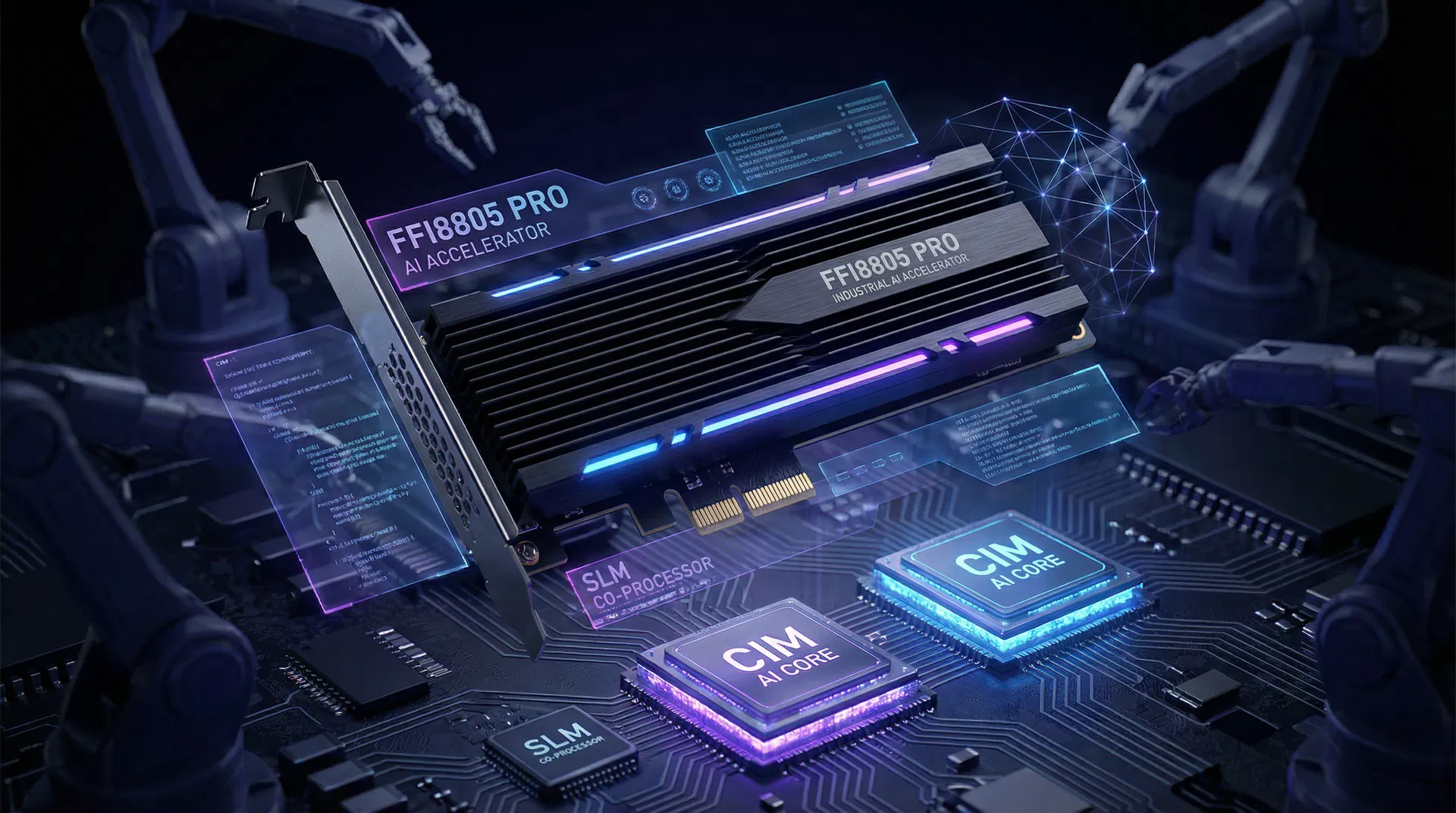

Industrial AI Accelerator FFI8805 Pro

Full CIM In-Memory Computing AI Edge Inference Engine

A full compute-in-memory (CIM) AI accelerator for industrial automation, automotive systems, and medical devices. It runs inference inside the memory arrays, which cuts the energy spent moving data between memory and compute units.

Three Core Advantages

FFI8805 Pro uses a full CIM architecture to deliver industrial-grade edge intelligence without additional processors.

Full CIM In-Memory Architecture

All computation is performed within the SRAM-CIM arrays, which removes the data-movement bottleneck and enables low-power inference.

Industrial-Grade Reliability

Wide operating temperature range from -40°C to 105°C, TEE secure boot, and compliance with industrial EMC standards.

Hierarchical Memory

SRAM plus 2 GB of LPDDR5 in a two-tier memory architecture that supports layered weight loading for large models.
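
As a rough illustration of layered weight loading, the sketch below groups consecutive model layers into batches that fit an on-chip SRAM budget, so that each batch could be copied from LPDDR5 before it runs. The function name, the layer sizes, and the 8 MiB SRAM budget are hypothetical; the FFI8805 Pro's actual SRAM capacity and loading scheme are not stated on this page, and reading "layered" as "layer by layer" is an assumption.

```python
MIB = 1024 * 1024


def plan_layer_batches(layer_bytes, sram_bytes):
    """Greedily group consecutive layers so each group's weights fit in SRAM.

    layer_bytes: weight size of each layer, in execution order.
    sram_bytes:  SRAM budget available for weights (hypothetical value).
    Returns a list of batches, each a list of layer indices.
    """
    batches, current, used = [], [], 0
    for index, size in enumerate(layer_bytes):
        if size > sram_bytes:
            # A single layer larger than SRAM would need tiling, not shown here.
            raise ValueError(f"layer {index} ({size} B) exceeds the SRAM budget")
        if used + size > sram_bytes:
            # Current batch is full: close it and start a new one.
            batches.append(current)
            current, used = [], 0
        current.append(index)
        used += size
    if current:
        batches.append(current)
    return batches


# Hypothetical per-layer weight sizes (MiB) and SRAM budget, for illustration only.
layer_sizes = [3 * MIB, 2 * MIB, 4 * MIB, 1 * MIB, 5 * MIB]
print(plan_layer_batches(layer_sizes, 8 * MIB))  # [[0, 1], [2, 3], [4]]
```

Each batch stands for one LPDDR5-to-SRAM transfer followed by in-memory execution of the layers in that batch. A real scheduler would also have to account for activation buffers and for overlapping transfers with compute.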

Full CIM In-Memory Architecture

FFI8805 Pro uses a pure CIM architecture in which all AI computation is performed directly in memory, with no additional RISC-V or NPU co-processors required.

CIM Inference Core

Dual SRAM-CIM arrays support in-memory inference for CNN, Transformer, and small language model (SLM) workloads.

CIM Inference Engine

Runs INT4/INT8 quantized language model inference natively on the CIM arrays, with no external processor required.

Unified Memory

2 GB of LPDDR5 unified memory dedicated to CIM model weights and inference buffers.
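
To make the INT4/INT8 and 2 GB figures above more concrete, here is a minimal sketch of symmetric quantization, an integer multiply-accumulate of the kind a CIM array would perform, and a weight-footprint check against the LPDDR5 capacity. This is generic quantization arithmetic, not the FFI8805 Pro toolchain: every function name is hypothetical, the per-tensor symmetric scheme is an assumption, and "2 GB" is taken to mean 2 × 1024³ bytes.

```python
LPDDR5_BYTES = 2 * 1024 ** 3  # assumes "2 GB" means 2 GiB


def quantize_symmetric(values, bits=8):
    """Map floats to signed integers in [-qmax, qmax] with one shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 127 for INT8, 7 for INT4
    peak = max((abs(v) for v in values), default=0.0)
    scale = peak / qmax if peak else 1.0
    quantized = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return quantized, scale


def int_dot(q_weights, q_inputs, w_scale, x_scale):
    """Accumulate in integers, then rescale once to a float result."""
    accumulator = sum(w * x for w, x in zip(q_weights, q_inputs))
    return accumulator * w_scale * x_scale


def weight_footprint_bytes(num_params, bits):
    """Bytes needed to store num_params weights at the given bit width."""
    return (num_params * bits + 7) // 8


def fits_in_lpddr5(num_params, bits, reserved_bytes=0):
    """True if the weights fit after reserving space for inference buffers."""
    return weight_footprint_bytes(num_params, bits) <= LPDDR5_BYTES - reserved_bytes


# Illustrative values only.
weights = [0.5, -1.0, 0.25]
inputs = [1.0, 0.5, -0.5]
qw, w_scale = quantize_symmetric(weights, bits=8)
qx, x_scale = quantize_symmetric(inputs, bits=8)
print(int_dot(qw, qx, w_scale, x_scale))  # close to the float result of -0.125

# A hypothetical 3-billion-parameter model with 256 MiB reserved for buffers.
print(fits_in_lpddr5(3_000_000_000, bits=8, reserved_bytes=256 * 1024 ** 2))  # False
print(fits_in_lpddr5(3_000_000_000, bits=4, reserved_bytes=256 * 1024 ** 2))  # True
```

The footprint check shows why the bit width matters on a fixed memory budget: halving the width from INT8 to INT4 halves the weight storage, at the cost of coarser quantization steps.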

Full CIM vs Traditional NPU Architecture

FFI8805 Pro uses a full compute-in-memory (CIM) design that embeds computation directly in the memory arrays to remove data-movement bottlenecks. The key metrics below compare it with a traditional RISC-V + NPU architecture.

FFI8805 Pro (Full CIM)

Compute-in-Memory Architecture

Traditional RISC-V + NPU

Conventional Discrete Architecture

No Memory Wall

Computation happens inside memory, so operands are not transferred to a separate compute unit

Ultra-Low Power

TDP < 3 W, energy efficiency up to 8 TOPS/W

Simplified System Design

No external RISC-V core or NPU needed, which lowers BOM cost

Compact Package

Die area of only 22 mm², suited to space-constrained designs
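
The power figures above (TDP < 3 W, up to 8 TOPS/W) can be turned into back-of-the-envelope estimates. The sketch below is plain unit arithmetic, not a published specification. It assumes that peak efficiency could be sustained at full TDP, which the page does not claim, and that one multiply-accumulate counts as two operations. The 1-billion-MAC workload is hypothetical.

```python
TDP_WATTS = 3.0           # the page states TDP < 3 W, so treat this as an upper limit
PEAK_TOPS_PER_WATT = 8.0  # the page states "up to" 8 TOPS/W


def energy_per_op_picojoules(tops_per_watt):
    """1 TOPS/W equals 1e12 operations per joule, i.e. 1 pJ per operation."""
    return 1.0 / tops_per_watt


def throughput_ceiling_tops(tdp_watts, tops_per_watt):
    """Upper bound on throughput if peak efficiency held at full TDP (assumption)."""
    return tdp_watts * tops_per_watt


def inference_energy_millijoules(macs, tops_per_watt, ops_per_mac=2):
    """Best-case energy for one inference of a model with the given MAC count."""
    operations = macs * ops_per_mac
    joules = operations / (tops_per_watt * 1e12)
    return joules * 1e3


print(energy_per_op_picojoules(PEAK_TOPS_PER_WATT))            # 0.125
print(throughput_ceiling_tops(TDP_WATTS, PEAK_TOPS_PER_WATT))  # 24.0
print(inference_energy_millijoules(1_000_000_000, PEAK_TOPS_PER_WATT))
```

These numbers are ceilings, not measurements: "up to" efficiency figures typically apply to favorable workloads, so real inference energy is likely to be higher.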

Data Sources & References

[1] ISSCC 2024 — SRAM-CIM Architecture Power & Performance Comparison Study

[2] IEEE JSSC 2023 — Computing-in-Memory vs Traditional NPU Energy Efficiency Analysis

[3] Nature Electronics 2023 — Compute-Storage Fusion Architecture Efficiency & Area Advantages

Data based on published academic research and internal testing. Actual performance may vary by workload and conditions.

